Radiology is the flagship use case for medical AI. The sector attracts more venture funding than any other medical specialty, and the FDA has cleared 400+ AI/ML algorithms across all of healthcare—radiology accounts for 25-30% of them. Yet the diagnostic core of radiology remains 0% automated by AI. Why? Because the regulatory and legal structure forbids it. This score, 5.1/10 in the Transformation zone, reflects a profession where AI reshapes the administrative and documentary periphery while the interpretive center remains human-in-the-loop by law.

🟠 5.1/10 – TRANSFORMATION

5.1 out of 10 places radiology firmly in the Transformation zone. The score reflects a specialty where AI augments every supporting task—report generation, equipment selection, quality control, continuing education—while the authoritative diagnostic decision remains the radiologist's alone. This is not a strategic choice by the profession. It's a regulatory and liability requirement hardcoded into FDA guidance and malpractice law. Every production AI radiology tool on the market positions itself as a radiologist-in-the-loop system, not an autonomous diagnostic engine.

🔴 Threatened Tasks

🟠 1. Provide advice on types or quantities of radiology equipment needed. Equipment selection, procurement strategy, and capital planning now routinely leverage AI cost-benefit analysis, lifecycle forecasting, and departmental workflow simulation tools. Radiologists advising on modality selection can ground recommendations in AI-supported data.

🟡 2. Participate in continuing education activities. AI platforms now generate personalized learning pathways and case review summaries, reducing the time radiologists spend on manual continuing education workflows. Certification prep and board review are also accelerated by AI systems.

🔴 3. Prepare comprehensive interpretive reports of findings. Rad AI and similar platforms generate structured radiology reports from preliminary findings, reducing report turnaround time from 30-60 minutes to 5-10 minutes of radiologist review and sign-off. FDA-cleared for report drafting and documentation.

🟠 4. Develop treatment plans for radiology patients. Imaging-informed treatment recommendations benefit from AI-assisted literature synthesis and guideline integration. Tumor board preparation, staging documentation, and follow-up scheduling are increasingly AI-supported.

🔴 5. Review or transmit images and information using picture archiving or communications systems. Aidoc and Viz.ai handle PACS/RIS integration, critical finding prioritization, and worklist routing. Radiologists focus on interpretation; AI handles information flow and triage.

🟠 6. Develop or monitor procedures to ensure adequate quality control of images. ACR Accreditation-aligned QA protocols are now generated and monitored by AI systems. Image quality assessment, dose tracking, and equipment performance testing benefit from automated flagging and historical trend analysis.

🔴 7. Perform or interpret the outcomes of diagnostic imaging procedures (worklist triage, not autonomous diagnosis). AI algorithms flag critical findings and triage cases for expedited radiologist review. The triage layer is AI-automated. Final interpretation remains radiologist-exclusive.
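The triage layer described in task 7 can be pictured as a priority queue sitting in front of the radiologist. The sketch below is only an illustration of that idea, not how Aidoc, Viz.ai, or any vendor implements it: the `Study` class, the `FLAG_PRIORITY` mapping, and the accession numbers are all hypothetical. It reorders the worklist by AI flag and leaves every study for a human to read.

```python
import heapq
from dataclasses import dataclass, field
from itertools import count


@dataclass(order=True)
class Study:
    priority: int                          # lower number = read sooner
    arrival: int                           # tie-breaker: first in, first out
    accession: str = field(compare=False)
    ai_flag: str = field(compare=False)


# Hypothetical mapping from an AI triage flag to a worklist priority.
FLAG_PRIORITY = {"critical": 0, "urgent": 1, "routine": 2}


def build_worklist(studies):
    """Return accession IDs in reading order; nothing is removed from the list."""
    arrival = count()
    heap = []
    for accession, ai_flag in studies:
        heapq.heappush(
            heap, Study(FLAG_PRIORITY[ai_flag], next(arrival), accession, ai_flag)
        )
    order = []
    while heap:
        order.append(heapq.heappop(heap).accession)
    return order


# Made-up studies in arrival order.
incoming = [("A100", "routine"), ("A101", "critical"),
            ("A102", "routine"), ("A103", "urgent")]
print(build_worklist(incoming))  # ['A101', 'A103', 'A100', 'A102']
```

The flagged study jumps the queue, but all four studies still reach the radiologist, which is the distinction between triage and autonomous diagnosis drawn above.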

🟢 Resistant Tasks

1. Obtain patients' histories from electronic records, patient interviews, dictated reports, or by communicating with referring clinicians. Clinical correlation requires human judgment, contextual understanding, and real-time communication with physicians. No AI replaces this gatekeeping function.

2. Instruct radiologic staff in desired techniques, positions, or projections. Real-time feedback, patient positioning, protocol adaptation, and troubleshooting require human supervision and physical demonstration.

3. Confer with medical professionals regarding image-based diagnoses. Face-to-face consultation with referring physicians, specialists, and surgeons is non-delegable. Clinical decision-making happens in these conversations, not in the report alone.

4. Coordinate radiological services with other medical activities. Departmental scheduling, clinical integration, surgical case support, and interdisciplinary workflow require human coordination and judgment.

5. Establish or enforce standards for protection of patients or personnel. Safety protocols, radiation dose optimization, contrast agent safety, and incident reporting are human-supervised regulatory obligations.

6. Recognize or treat complications during and after procedures. Interventional radiology, adverse event management, patient assessment, and real-time clinical decision-making during procedures are exclusively human-performed.

7. Administer radiopaque substances by injection, orally, or as enemas. Contrast administration, patient assessment for allergies and renal function, and monitoring for adverse reactions are hands-on clinical tasks.

Recommended AI Tools

| Tool | Usage for Radiologist | Pricing |
|---|---|---|
| Rad AI | Generates structured radiology reports from preliminary findings. FDA-cleared for report drafting. Reduces report turnaround from 30-60 min to 5-10 min review + sign-off. | Enterprise pricing |
| Aidoc | Flags critical findings and routes high-confidence cases to radiologist triage. Worklist prioritization, expedited review. FDA-cleared. Deployed 200+ hospitals. | Enterprise pricing |
| Viz.ai | Integrates into PACS/RIS systems. Identifies stroke and PE candidates for radiologist triage. Multiple FDA 510(k) clearances. 250+ hospital sites. | Enterprise pricing |

Free Prompt: Claude

| Tool | Claude (Free / $20/mo Pro) |
|---|---|
| When to Use | After completing diagnostic imaging interpretation; initial report structure for radiologist review and sign-off |
| Outcome | A structured radiology report (INDICATION, TECHNIQUE, FINDINGS, IMPRESSION, DIFFERENTIAL) using standard terminology and ACR format. Ready for radiologist review and electronic signature. Saves 8-15 minutes per report on initial structuring. |

The Prompt:

You are a senior radiologist drafting a structured diagnostic radiology report following ACR (American College of Radiology) reporting guidelines and using RadLex standardized terminology. From the imaging findings, patient context, and applicable standards below, generate a structured radiology report with these sections:

1. INDICATION (Clinical question or reason for exam)
2. TECHNIQUE (Equipment, parameters, contrast if applicable)
3. FINDINGS (Systematically describe all relevant observations using RadLex terms)
4. IMPRESSION (Concise summary; use standard terminology)
5. DIFFERENTIAL DIAGNOSIS, if applicable (Ranked by likelihood with brief rationale)

Structure FINDINGS by anatomic region:

- For each region, state the finding in RadLex terms (e.g., "no focal consolidation," "ground-glass opacity," "hypodense lesion")
- Include measurements for lesions (long/short axis or diameter)
- Quantify extent (e.g., "10% of lung volume")

For IMPRESSION, use the appropriate standard:

- Mammography: BI-RADS category (0–6)
- Lung CT: Lung-RADS category (0–4)
- Thyroid: TI-RADS category (1–5)
- Generic: ACR format (finding + significance + recommendation)

Example (chest radiograph):

INDICATION: Cough, possible pneumonia

TECHNIQUE: Frontal and lateral chest radiograph, portable, no prior comparison.

FINDINGS:

- Lungs: Ill-defined ground-glass opacities in the left mid and lower lung zones, approximately 4 cm × 3 cm, with air bronchogram sign. No pneumothorax or pleural effusion.
- Heart: Normal size.
- Mediastinum: No shift or widening.

IMPRESSION: Left lower lobe pneumonia. Recommend clinical correlation and follow-up radiograph in 4–6 weeks.

---

[FINDINGS SUMMARY]: [INSERT YOUR IMAGING FINDINGS AND MEASUREMENTS]

[PATIENT CONTEXT]: [INSERT AGE, CLINICAL HISTORY, PRIOR EXAMS]

[APPLICABLE STANDARDS]: [INSERT BIRADS / LUNGRADS / TIRADS / RADLEX REFS]

Do not invent findings or measurements. If a region was not evaluated, flag [NOT REVIEWED]. If findings conflict with the clinical history, flag ⚠️ and note the discrepancy. Do not make or imply treatment recommendations beyond standard follow-up protocols.

Why It Works: This prompt structures YOUR imaging findings into a professional radiology report using ACR format and RadLex standardized terminology. It does NOT interpret the images, generate clinical diagnoses, or make treatment recommendations. A radiologist completes the interpretation; this prompt ensures the documentation is structured, compliant, and ready for signature.

Pro Tip: Use this when you've finished reading a study and need to produce a report on deadline. It eliminates formatting delays and ensures all required sections (INDICATION, TECHNIQUE, FINDINGS, IMPRESSION) are present and clinically organized.
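For anyone pasting this prompt into a script rather than a chat window, the two guardrails above (fill every bracketed slot, then confirm the required sections are present) can be checked mechanically. The following is a minimal sketch under stated assumptions: `fill_prompt` and `missing_sections` are hypothetical helper names, the template is shortened to two slots, and the clinical text is made up for illustration. It does not call any model.

```python
import re

# Matches any placeholder of the form [INSERT ...] that is still unfilled.
PLACEHOLDER = re.compile(r"\[INSERT [^\]]*\]")
# Section headings the article lists as required in the drafted report.
REQUIRED_SECTIONS = ("INDICATION", "TECHNIQUE", "FINDINGS", "IMPRESSION")


def fill_prompt(template: str, fields: dict[str, str]) -> str:
    """Replace each '[LABEL]: [INSERT ...]' slot with radiologist-supplied text."""
    filled = template
    for label, text in fields.items():
        slot = re.compile(r"\[" + re.escape(label) + r"\]:\s*\[INSERT [^\]]*\]")
        # A function replacement avoids backslash-escape surprises in the text.
        filled = slot.sub(lambda _m, l=label, t=text: f"[{l}]: {t}", filled)
    leftover = PLACEHOLDER.findall(filled)
    if leftover:
        raise ValueError(f"unfilled placeholders: {leftover}")
    return filled


def missing_sections(draft: str) -> list[str]:
    """List required headings that do not start any line of the draft."""
    return [s for s in REQUIRED_SECTIONS
            if not re.search(rf"^{s}:", draft, flags=re.MULTILINE)]


# Shortened, illustrative template and made-up inputs.
template = (
    "[FINDINGS SUMMARY]: [INSERT YOUR IMAGING FINDINGS AND MEASUREMENTS]\n"
    "[PATIENT CONTEXT]: [INSERT AGE, CLINICAL HISTORY, PRIOR EXAMS]\n"
)
prompt = fill_prompt(template, {
    "FINDINGS SUMMARY": "No focal consolidation. No pleural effusion.",
    "PATIENT CONTEXT": "54F, cough for 2 weeks, no prior exams.",
})
print(prompt)
print(missing_sections("INDICATION: Cough\nFINDINGS: Clear lungs\n"))
```

Failing loudly on a leftover `[INSERT ...]` slot is a deliberate choice here: a half-filled prompt is more likely to produce invented findings, which the prompt itself forbids.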

Found this useful? Share it with a colleague.

This scorecard is 100% free — all sections unlocked.

Starting next week, deep analysis (5 criteria breakdown, career horizon, 4 additional AI prompts) will be reserved for paying subscribers. You just saw everything the paid tier includes. If it’s worth $10/month to you, subscribe now and lock in the founding rate.

The Five Scoring Criteria: Deep Dive

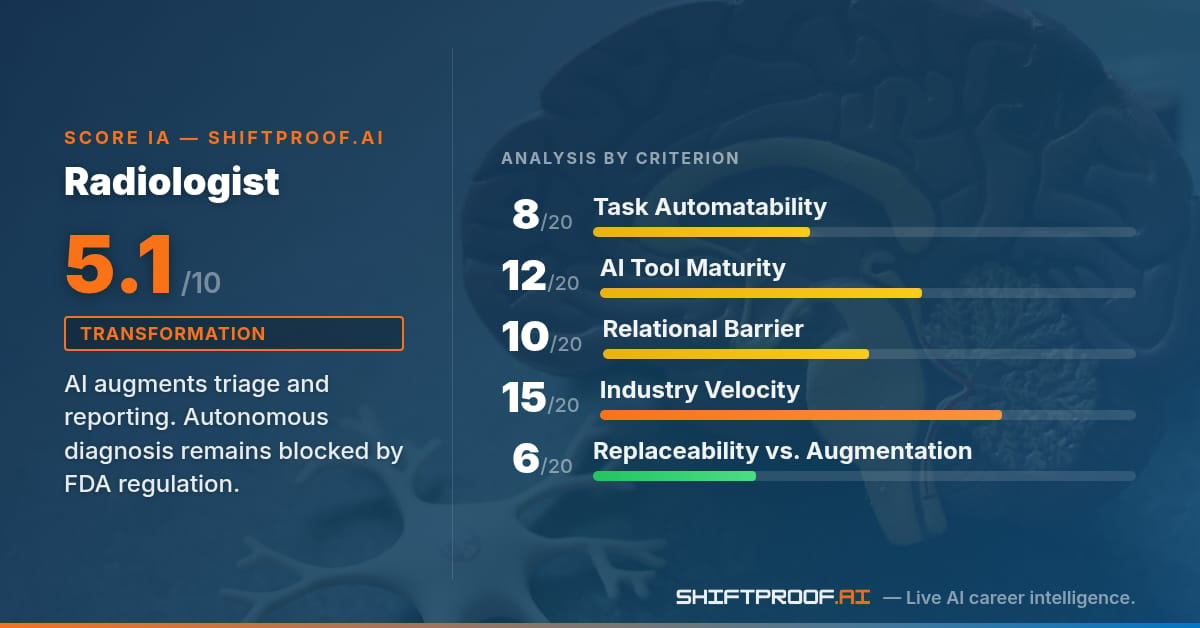

1. Task Automatability — 8/20

72% of a radiologist's 29 O*NET tasks carry some level of observed AI exposure. The highest-exposed tasks are providing advice on radiology equipment selection, participating in continuing education, preparing interpretive reports, developing treatment plans, reviewing PACS images, ensuring quality control, and performing worklist triage. Yet the core diagnostic tasks—performing or interpreting imaging procedures (autonomous diagnosis), obtaining patient histories, conferring with clinicians—show zero measurable AI automation. The pattern is clear: AI touches the administrative and documentation periphery, not the diagnostic center. This reflects a deep regulatory and clinical reality: radiologist interpretation remains the authoritative decision point. Report drafting, terminology standardization, and protocol optimization are fair game. Diagnosis is not. Score: 8/20. Minimal coverage depth; broad surface exposure but hollow at the core.

2. AI Tool Maturity — 12/20

The radiology AI landscape is mature and well-funded. Aidoc (Series D, $100M+ total funding) deploys AI algorithms across 200+ hospitals to flag critical findings for expedited radiologist review. Viz.ai (Series C+, $250M estimated valuation) integrates directly into hospital PACS and RIS systems at 250+ sites, identifying stroke and pulmonary embolism candidates for radiologist triage. Rad AI (Series B, $50M+ raised) generates structured radiology reports from preliminary findings, cutting report turnaround time. All three products have multiple FDA 510(k) clearances. The tooling is production-ready, enterprise-integrated, and revenue-generating. Yet none attempt autonomous diagnosis. The regulatory and liability barriers are absolute: FDA guidance explicitly requires radiologist review and sign-off for any clinical decision. No clearance path exists for AI-only diagnosis. Malpractice law assigns the duty of interpretation to the radiologist, not the tool vendor. Score: 12/20. High tool maturity and enterprise adoption, but all products stop at the augmentation boundary.

3. Relational & Physical Barrier — 10/20

Radiology is a hybrid role with asymmetric teleradiology adoption. Routine diagnostic cases (chest X-rays, plain radiographs, routine CTs) can be read remotely; boutique imaging centers and teleradiology networks operate at scale. Interventional procedures, complex biopsies, real-time ultrasound, and same-day patient communication require physical presence. Face-to-face frequency is low for pure diagnostic radiologists (4.6/5 O*NET) but essential for interventionalists. Physical proximity to patients is near-zero for diagnostic radiology and high for interventional radiology. The relational barrier is weak compared to surgery or psychiatry, but the dependency on PACS/RIS workflows and the need for real-time clinician consultation anchor the role. Score: 10/20. Moderate barrier. Teleradiology reduces relational friction for routine diagnostic work, but interventional and clinic-integrated roles require physical presence.

4. Industry Change Velocity — 15/20

Radiology is THE most AI-active medical specialty by funding and FDA clearance velocity. The sector's composite velocity score (0.73, based on McKinsey 0.72, CB Insights top-third positioning, Deloitte 0.68) exceeds finance in absolute velocity but trails software. The radiology-specific signals are extraordinary: of the 400+ cumulative FDA AI/ML clearances across all of healthcare, radiology alone accounts for 25-30%. Quarterly healthcare AI funding sits at $8.2B. Aidoc and Viz.ai alone represent $350M+ in venture conviction over the past 18 months. Multiple startup exits (Tempus, Zebra Medical Vision) have validated the market. Series D, C+, and B funding across three production-grade tools signals sustained confidence. Radiology departments are moving from "should we adopt AI?" to "which tools do we stack?" Major hospital systems (Mayo, Cleveland Clinic, UCSF) have already deployed AI as standard practice. Score: 15/20. Very high velocity. Radiology is the flagship use case for medical AI. Signal density is extreme.

5. Replaceability vs. Augmentation — 6/20

This is where radiology diverges sharply from other roles. On paper, replaceability is higher than the augmentation framing suggests: the IMF (0.78) and OECD (0.75) indices both lean toward augmentation, but only slightly. Yet the regulatory and legal structure makes autonomous AI diagnosis impossible. FDA guidance explicitly requires human radiologist review and interpretation for any clinical decision. No clearance path exists for "AI-only diagnosis." Malpractice liability law assigns the duty of interpretation to the radiologist, not the tool vendor. This creates a hard ceiling: AI can augment, triage, and accelerate, but cannot replace the radiologist's judgment. The market is fully aligned with this boundary; no vendor is pushing for autonomous diagnosis, and no hospital would accept it. Every production AI radiology product positions itself as radiologist-in-the-loop. This regulatory constraint will not change within any foreseeable horizon. Score: 6/20. Low replaceability. The regulatory and liability floor is absolute and non-negotiable. This is the lowest replaceability score across all occupations in the ShiftProof dataset.
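The five criterion scores above are consistent with the 5.1/10 headline if the headline is simply their sum rescaled from 100 to 10. The article does not state the formula, so treat the rescaling in this short check as an assumption; `headline_score` is a name chosen here for illustration.

```python
# Criterion scores as reported in the five sections above (each out of 20).
CRITERIA = {
    "Task Automatability": 8,
    "AI Tool Maturity": 12,
    "Relational & Physical Barrier": 10,
    "Industry Change Velocity": 15,
    "Replaceability vs. Augmentation": 6,
}
MAX_PER_CRITERION = 20


def headline_score(criteria):
    """Sum the criteria and rescale to a 0-10 headline (assumed formula)."""
    total = sum(criteria.values())
    maximum = MAX_PER_CRITERION * len(criteria)
    return total, maximum, round(10 * total / maximum, 1)


total, maximum, score = headline_score(CRITERIA)
print(f"{total}/{maximum} -> {score}/10")  # 51/100 -> 5.1/10
```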

Career AI Prompts: Full Specifications

Prompt 2 — Differential Diagnosis Structure (Radiologist Review)

| Tool | Claude |
|---|---|
| Task | Perform or interpret the outcomes of diagnostic imaging procedures |
| When | Building a differential diagnosis for complex imaging findings |

You are a senior diagnostic radiologist building a structured differential diagnosis framework following BI-RADS (for breast), Lung-RADS (for lung), TI-RADS (for thyroid), or generic imaging standards depending on the exam type. From the imaging finding, clinical context, and applicable standards below, generate a ranked differential diagnosis with:

1. Primary diagnosis (Most likely, with imaging rationale)
2. Differential diagnoses (Ranked by likelihood: High / Moderate / Low)
3. Supporting imaging features (Key findings that support or refute each)
4. Additional imaging or follow-up recommendations (If needed to narrow differential)
5. BI-RADS / Lung-RADS / TI-RADS category (If applicable)

Example (breast):

PRIMARY DIAGNOSIS: Fibroadenoma

- Age-appropriate benign lesion
- Well-circumscribed, isoechoic (ultrasound)
- No suspicious features

DIFFERENTIAL DIAGNOSES:

1. Phyllodes tumor (Low likelihood)
   - Small size is atypical for phyllodes tumor (this lesion is 1.5 cm)
   - No heterogeneous internal echoes
2. Invasive ductal carcinoma (Low likelihood)
   - Sharp borders are atypical for IDC
   - No surrounding desmoplasia

FOLLOW-UP: BI-RADS 2 (benign). Routine screening in 12 months. No additional imaging.

---

[PRIMARY FINDING]: [INSERT YOUR IMAGING FINDING]

[CLINICAL CONTEXT]: [INSERT AGE, RISK FACTORS, SYMPTOMS]

[APPLICABLE STANDARD]: [INSERT BIRADS / LUNGRADS / TIRADS / OTHER]

Do not make a clinical diagnosis. Frame all differentials as possibilities for radiologist review. If clinical history is insufficient, flag [CLINICAL HISTORY NEEDED].

Expected result: A structured differential diagnosis framework with ranked diagnoses, imaging rationale, and follow-up recommendations. Ready for radiologist clinical judgment call. Saves 5-10 minutes on differential structuring for complex cases.

Prompt 3 — Quality Assurance Protocol Draft

| Tool | Claude |
|---|---|
| Task | Develop or monitor procedures to ensure adequate quality control of images |
| When | Creating or updating a radiology department QA program |

You are a senior radiology QA specialist drafting a radiology department quality assurance program following ACR (American College of Radiology) Accreditation standards and ACR Practice Parameters for Quality Improvement. From the department scope, regulatory baseline, and current issues below, generate a QA protocol with these sections:

1. SCOPE AND OBJECTIVES (Covered modalities, QA goals, success metrics)
2. IMAGE QUALITY ASSESSMENT (Visual inspection criteria per modality)
3. DOSE MONITORING (Dose tracking, dose reduction strategies, compliance with diagnostic reference levels)
4. EQUIPMENT PERFORMANCE TESTING (Daily, weekly, monthly, annual physics tests)
5. PERSONNEL TRAINING (Initial competency, continuing education requirements for technologists and radiologists)
6. INCIDENT REPORTING (Process for reporting QA failures, corrective actions, documentation)
7. DOCUMENTATION AND RECORDS RETENTION (QA reports, corrective action logs, archival)

For each modality (X-ray, CT, MRI, ultrasound):

- List the primary image quality metrics
- Specify inspection frequency
- Define acceptance criteria

Example (X-ray):

MODALITY: Portable Chest Radiography

METRICS: Positioning, exposure, collimation, artifacts

FREQUENCY: Daily (first case of the day) + per incident

ACCEPTANCE: Collimation within 2% of source-to-image distance (SID); no artifacts; positioning landmarks visible per the department's positioning protocol.

---

[DEPARTMENT SCOPE]: [INSERT MODALITIES, VOLUME, FACILITIES]

[REGULATORY BASELINE]: [INSERT ACR STANDARDS / STATE / LOCAL REFS]

[CURRENT ISSUES] (optional): [INSERT PREVIOUS QA FINDINGS OR DEFICIENCIES]

Do not invent physics parameters or dose targets. Reference ACR Practice Parameters for specific numerical criteria. Flag any standard requiring physicist review as [PHYSICIST REVIEW].

Expected result: A department QA protocol framework covering image quality, dose monitoring, equipment testing, training, and incident reporting. Ready for institutional review and adoption. Saves 8-12 hours on initial QA protocol drafting.

Prompt 4 — Treatment Plan Structure (Oncology Radiology)

| Tool | GPT |
|---|---|
| Task | Develop treatment plans for radiology patients |
| When | Building a structured treatment recommendation from diagnostic imaging and biopsy/pathology results |

You are a senior oncology radiologist structuring a treatment recommendation for a tumor board presentation following NCCN (National Comprehensive Cancer Network) guideline format. From the imaging, pathology, patient data, and guidelines below, generate a structured treatment recommendation with:

1. PATIENT SUMMARY (Age, performance status, comorbidities)
2. IMAGING STAGING (TNM stage by imaging, metastatic workup)
3. PATHOLOGY FINDINGS (Histology, grade, molecular markers, prognostic factors)
4. GUIDELINE-CONCORDANT OPTIONS (Primary treatment options per NCCN, ranked by evidence level)
5. RISK STRATIFICATION (Low/intermediate/high risk, with rationale)
6. RECOMMENDED APPROACH (Primary recommendation with alternatives)
7. IMAGING FOLLOW-UP PLAN (Surveillance timing and modality)

Example (non-small-cell lung cancer):

PATIENT SUMMARY: 65M, PS 1, hypertension, prior smoking history

IMAGING STAGING: T3N1M0, Stage IIIA by IASLC

PATHOLOGY: Adenocarcinoma, Grade 2, PD-L1 <1%, EGFR wild-type, ALK negative

GUIDELINE OPTIONS (NCCN Preferred):

1. Concurrent chemoradiation (Category 1)
   - 66-70 Gy thoracic RT + platinum-etoposide
2. Surgery + adjuvant chemotherapy (Category 1, if resectable)

RISK: Intermediate (N1, lower PD-L1)

RECOMMENDATION: Concurrent CRT with reassessment for consolidation immunotherapy per NCCN v7.

---

[IMAGING SUMMARY]: [INSERT TUMOR LOCATION, SIZE, STAGE BY IMAGING]

[PATHOLOGY REPORT]: [INSERT HISTOLOGY, GRADE, MOLECULAR MARKERS]

[PATIENT DATA]: [INSERT AGE, PERFORMANCE STATUS, COMORBIDITIES]

[APPLICABLE GUIDELINES]: [INSERT NCCN VERSION / TUMOR BOARD CONSENSUS]

Do not override NCCN or institutional guidelines. If patient data conflicts with guideline assumptions, flag ⚠️.

Expected result: A structured treatment recommendation framework ready for tumor board presentation. Captures staging, pathology, risk stratification, and guideline-concordant options. Saves 4-6 hours on tumor board preparation.

Prompt 5 — Equipment Procurement Analysis

| Tool | Claude |
|---|---|
| Task | Provide advice on types or quantities of radiology equipment needed |
| When | Planning departmental equipment purchases or upgrades |

You are a senior radiology department director analyzing equipment procurement needs following ACR Practice Parameters and manufacturer recommendations. From the current inventory, volume forecast, and regulatory requirements below, generate an equipment analysis with:

1. CURRENT INVENTORY ASSESSMENT (Equipment list, age, utilization rates, maintenance status)
2. VOLUME ANALYSIS (Current vs. projected exams per modality, throughput bottlenecks)
3. REGULATORY COMPLIANCE CHECK (ACR accreditation requirements, state licensing, facilities needs)
4. TECHNOLOGY ASSESSMENT (Available modalities, AI integration options, workflow efficiency gains)
5. EQUIPMENT RECOMMENDATIONS (Priority ranking: replace / upgrade / add)
6. IMPLEMENTATION TIMELINE (Installation, downtime impact, staff training needs)
7. FACILITY READINESS (Electrical, shielding, infrastructure requirements)

Example:

CURRENT INVENTORY:

- 2x GE Discovery 64-slice CT (2012, 2015)
- 1x Siemens 3T MRI (2008, near end of service)
- 5x Radiography rooms (fluoroscopy-capable)

VOLUME ANALYSIS:

- CT: 8,400 exams/year (current capacity 9,000)
- MRI: 3,200 exams/year (current capacity 3,500)
- Projected growth: 12% annual (next 3 years)

RECOMMENDATIONS:

1. REPLACE (Priority 1): MRI: exceeds expected lifespan; replace with a current 3.0T system with AI reconstruction
2. UPGRADE (Priority 2): CT #1: add dual-energy, iterative reconstruction
3. ADD (Priority 3): Ultrasound room (#6): capacity gap for OB/GYN demand

---

[CURRENT INVENTORY]: [INSERT EQUIPMENT LIST, AGE, UTILIZATION]

[VOLUME FORECAST]: [INSERT ANNUAL EXAMS, PROJECTED GROWTH]

[REGULATORY REQUIREMENTS]: [INSERT ACR / STATE STANDARDS]

Do not estimate costs or draft a request for proposal (RFP). Flag facility readiness gaps (electrical, MRI room shielding, etc.) as [FACILITIES REVIEW].

Expected result: An equipment analysis with current inventory assessment, volume projections, compliance check, technology options, and prioritized recommendations. Ready for capital planning. Saves 6-10 hours on equipment procurement planning.
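The volume analysis in the example prompt is simple enough to check by hand before handing it to a model. The sketch below projects the example's CT and MRI volumes forward, assuming the 12% annual growth compounds year over year (the prompt does not say whether it does) and using a hypothetical helper name, `first_year_over_capacity`.

```python
def first_year_over_capacity(current, capacity, growth, horizon=3):
    """Return (year, projected_volume) for the first year demand exceeds
    capacity within the horizon, or None if it never does.
    Assumes growth compounds annually."""
    volume = current
    for year in range(1, horizon + 1):
        volume *= 1 + growth
        if volume > capacity:
            return year, round(volume)
    return None


# Figures taken from the example inside the prompt above.
print(first_year_over_capacity(8400, 9000, 0.12))  # CT  -> (1, 9408)
print(first_year_over_capacity(3200, 3500, 0.12))  # MRI -> (1, 3584)
```

Under that assumption both modalities exceed their stated capacity in the first projected year, which is the kind of bottleneck the VOLUME ANALYSIS section is meant to surface.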

Career Horizon: Your 3–5 Year Path

Short term (0-2 years)

Radiology departments adopt AI-augmented reading workflows as standard practice. Report generation, preliminary triage algorithms, and quality control automation become default tools across the field. Radiologist productivity (reports per hour) increases measurably. The radiologist role does not shrink; the volume-per-radiologist increases. Early-adopting departments gain competitive advantage in turnaround time and report quality consistency.

Medium term (2-5 years)

Worklist triaging becomes AI-first: algorithms automatically flag critical findings and route high-confidence cases to junior radiologists or advanced practitioners, freeing senior radiologists for complex and ambiguous studies. Subspecialty AI tools (cardiac, neuro, musculoskeletal) reach higher maturity and vendor integration. The radiologist shifts from volume interpretation to supervision and complex case adjudication. Teleradiology workflows fully integrate AI, enabling smaller on-call teams to manage larger volumes.

Accelerators

• 400+ cumulative FDA AI/ML clearances across all of healthcare; radiology accounts for 25-30%

• Series D and C+ funding for Aidoc and Viz.ai, alongside competitor raises, signals sustained venture confidence

• Major hospital systems (Mayo, Cleveland Clinic, UCSF) deploying AI as standard-of-care

Brakes

• Regulatory requirement for radiologist review and sign-off is absolute and non-negotiable

• Liability law assigns diagnostic duty to the radiologist, not the AI vendor

• Subspecialty knowledge (interventional, pediatric, neuroradiology) remains difficult to automate

• Legacy PACS/RIS systems in many hospitals slow AI tool integration

• Training pipeline for new radiologists remains capacity-constrained; AI adoption does not address physician supply shortage

The Bottom Line

Radiology at 5.1/10 is a profession in transformation, not replacement. The score reflects a specialty where AI reshapes the administrative and documentary periphery while the interpretive center remains human, held in place by law and liability. Every production-ready AI radiology tool is a radiologist-in-the-loop system. No autonomous diagnosis pathway exists, and none is being pursued by vendors or regulators. The strategic move for radiologists is clear: master the AI tools that augment report generation, workflow triage, and quality control. Those tools deliver measurable acceleration (8-15 minutes per report, 10-100x faster simulation, critical finding alerts). Radiologists who adopt these tools early gain productivity advantages and can manage higher case volumes without increasing physical effort. Those who resist will face competitive pressure on the tasks where AI demonstrably delivers value. The diagnostic judgment itself remains the radiologist's domain and will remain so for any foreseeable horizon. The rate-limiting step is not automation. It is the radiologist's time. AI helps manage that resource more efficiently, but does not replace the radiologist who deploys it.


ShiftProof.ai

Live AI career intelligence.